“There is nothing so useless as doing efficiently that which should not be done at all.”

— Peter Drucker

If you work in product management, product development or just in technology or software at all, you’ve probably heard of the term ‘MVP’ or Minimum Viable Product. Everyone is using it these days.

The term MVP was coined in 2001 by Frank Robinson and later popularised by Eric Ries’s The Lean Startup. It’s valuable because it gives us a way to check that what we’re doing actually matters.

The idea is simple: start learning now, and use the smallest amount of effort to build the next thing that will be sustainable.

1. Take a hypothesis, e.g. “I think moving the sign-up form to the top of the page will lead to more sales.” Build something that tests this hypothesis.

2. Measure the outcome.

3. Learn. Did what you thought would happen, happen? If so, great: go back to step 1. If not, go back to step 1 anyway. Either way, take what you learned with you.

Keep doing this until you decide you might be doing the wrong thing, at which point go back to step 1 and start with a bigger hypothesis (sometimes called pivoting).

But despite its simplicity and popularity, there are some critical misunderstandings of MVP. Some people see it as a way to drive truly iterative, hypothesis-based development, to make sure they’re building the right thing. But others, mostly those unfamiliar with its origins, hear what they want to hear and see it as a shortcut to implement whatever it was they wanted to do anyway, almost regardless of what any data says. They seem to think it just means “my first version”. And so it has become another tool-turned-weapon used against teams, rather than the force for good it was once hoped to be.

So, here are some signs that you, your PM, or your team might be doing MVP wrong, and some ways to do it “properly”.

Signs of MVP Abuse

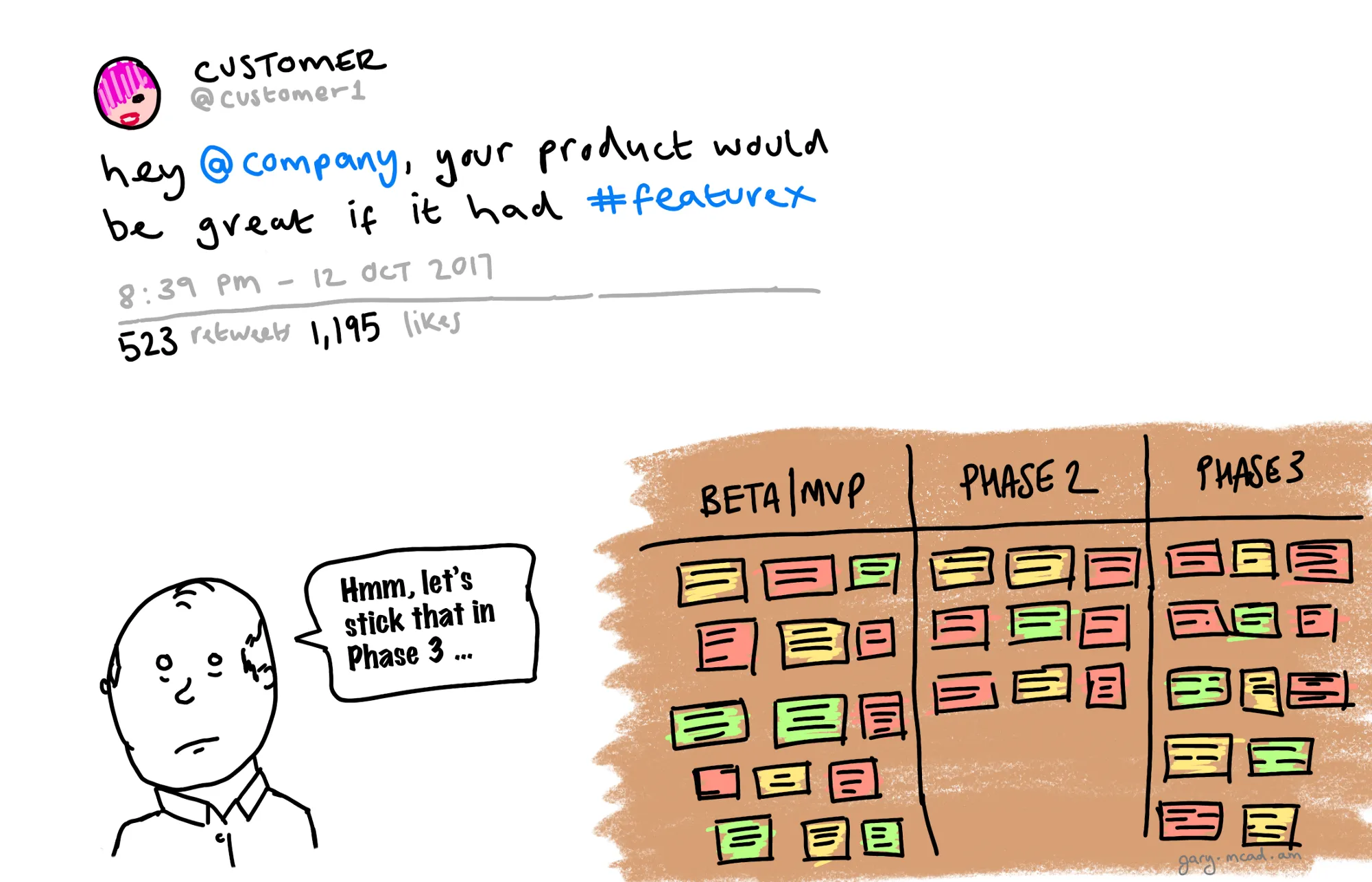

Scope negotiation: rather than being about validating a hypothesis, MVP becomes a way to negotiate scope, as in “just one more thing for MVP” or “can we pull this into the MVP and push this to phase 2?”.

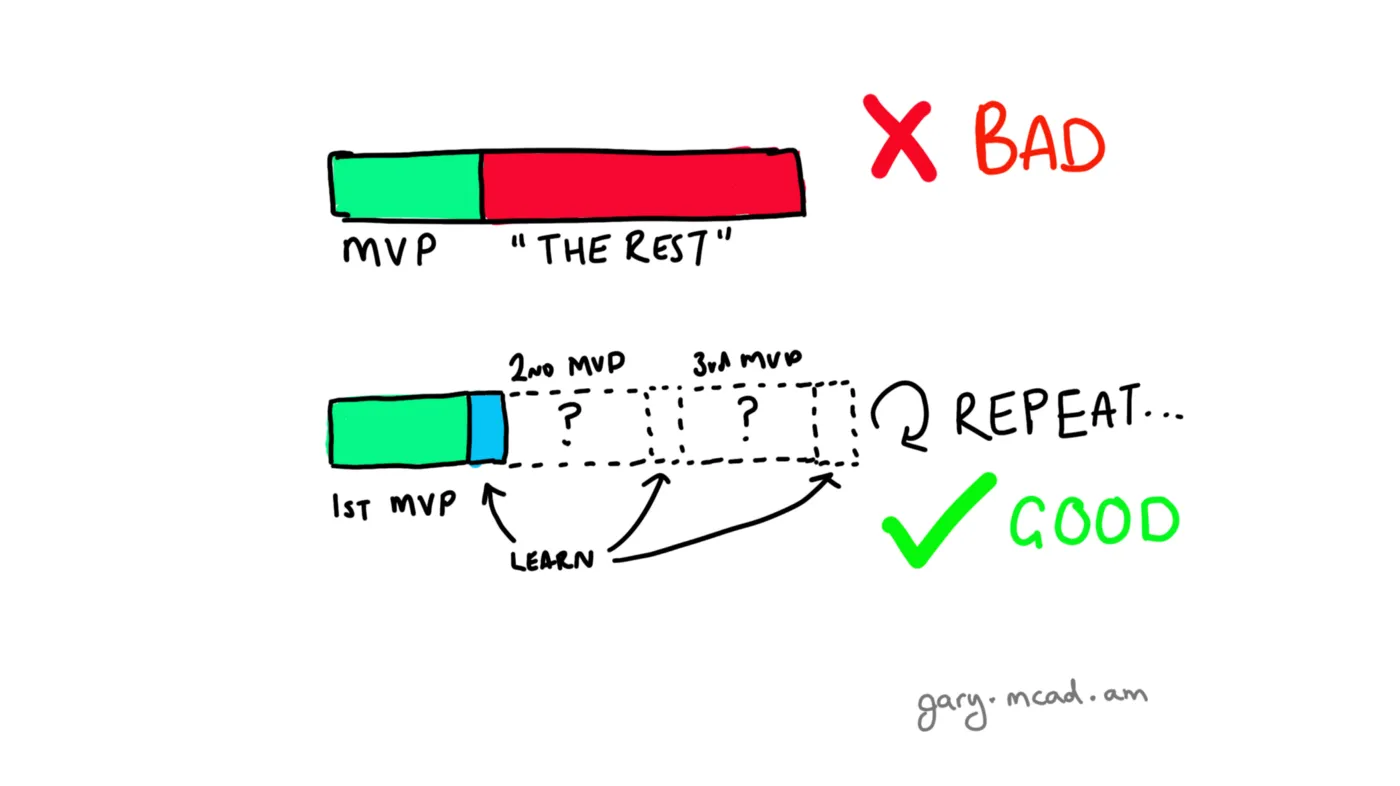

Just a single MVP: MVP is a process, not a goal. You don’t “reach the MVP”. There’s no “the MVP” and “the rest of the product”, because that assumes your product is at some point finished, which would mean there’s no room for improvement, no more learning, no more features. That doesn’t sound great.

Phrases like “beta” and “alpha”, or “phase 1, 2, n…”: these are red flags. They suggest that extra work has already been planned way ahead of time and that, regardless of what you learn, that work is going to be implemented. Huge no-no. If we don’t learn, we can’t improve. We’re doomed to implement the wrong thing, over and over again.

No validation, no data: this is another red flag. If your hypothesis has no data behind it, it’s likely that the conclusion has already been reached, or at least will be reached without proper validation. Also, write down your hypothesis first, so you don’t fall foul of the bias caused by post-hoc theorising.

Good MVP Practices

Continuous feedback loops of build, measure and learn: this continues ad infinitum, or until you realise that you need to pivot. The quicker the loop closes, the faster you learn and the quicker you can act.

Data-driven: you need real data to back up your hypothesis, so start collecting as much as you can now. Avoid vanity metrics; instead favour innovation accounting. If you don’t measure what you’re doing, you can’t tell what direction you’re going in.

A way to test a hypothesis: using the data you’ve collected, validate a single hypothesis. Understand whether the data supports or contradicts it, and then iterate. Again, write down your hypothesis first!
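As a rough sketch of what validating a single hypothesis could look like for the sign-up form example, the snippet below runs a one-sided two-proportion z-test on conversion counts. Everything in it is an assumption made for illustration: the `Variant` type, the function name, the visitor and conversion numbers, and the 0.05 threshold (a common convention, not something the Lean Startup material prescribes). The point is only that the hypothesis and the decision rule are fixed before the data is looked at.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Variant:
    """Hypothetical container for one arm of an experiment."""
    name: str
    visitors: int
    conversions: int

    @property
    def rate(self) -> float:
        return self.conversions / self.visitors


def two_proportion_z_test(control: Variant, treatment: Variant) -> Tuple[float, float]:
    """Return (z, one-sided p-value) for 'treatment converts better than control'."""
    total_conversions = control.conversions + treatment.conversions
    total_visitors = control.visitors + treatment.visitors
    pooled = total_conversions / total_visitors
    std_err = math.sqrt(
        pooled * (1 - pooled) * (1 / control.visitors + 1 / treatment.visitors)
    )
    z = (treatment.rate - control.rate) / std_err
    # Upper-tail probability of a standard normal: P(Z >= z).
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_value


# Written down BEFORE collecting data (all values are made up):
HYPOTHESIS = "Moving the sign-up form to the top of the page increases sales."
ALPHA = 0.05  # assumed significance threshold

control = Variant("form at bottom", visitors=1000, conversions=50)
treatment = Variant("form at top", visitors=1000, conversions=70)

z, p = two_proportion_z_test(control, treatment)
verdict = "supported" if p < ALPHA else "not supported"
print(f"{HYPOTHESIS} z={z:.2f}, p={p:.3f}: {verdict} by this experiment")
```

Whichever way the result falls, it feeds the next hypothesis. A result that does not clear the threshold is still a learning, not a failure of the process.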

Doesn’t have to be functional, or even a product: it just has to prove the hypothesis. Dropbox is a famous example. Their product wasn’t ready for use, so instead of risking a premature release, CEO Drew Houston created a demo video and submitted it to Digg. This grew their “beta” waiting list from 5,000 to 75,000 people overnight. It’s an incredible story, and the rest, as they say, is history. Here’s an important quote from Dropbox’s Startup Lessons Learned presentation in 2010:

“[The] biggest risk [is] making something that no one wants […] Not launching is painful, but not learning is fatal […] Put something in users’ hands, [it] doesn’t have to be code, and get real feedback [as soon as possible.]”

— Drew Houston, Dropbox CEO

LEARN FAST: it’s not just about hitting a deadline and pleasing your boss. It’s about getting feedback from your users as early as you can, so that you can learn about them and your product. The quicker you can do this, the quicker you can fail or succeed. To summarise:

“The only way to win is to learn faster than anyone else.”

— Eric Ries, The Lean Startup

But what about long-term plans?

A common criticism of MVP is that you can’t do long-term planning if you are working with an MVP. That’s not strictly true. Sure, you focus more on the immediate problems and on getting feedback, but you still have to know in which direction you’re going, and you need a long-term vision that you’re aiming for.

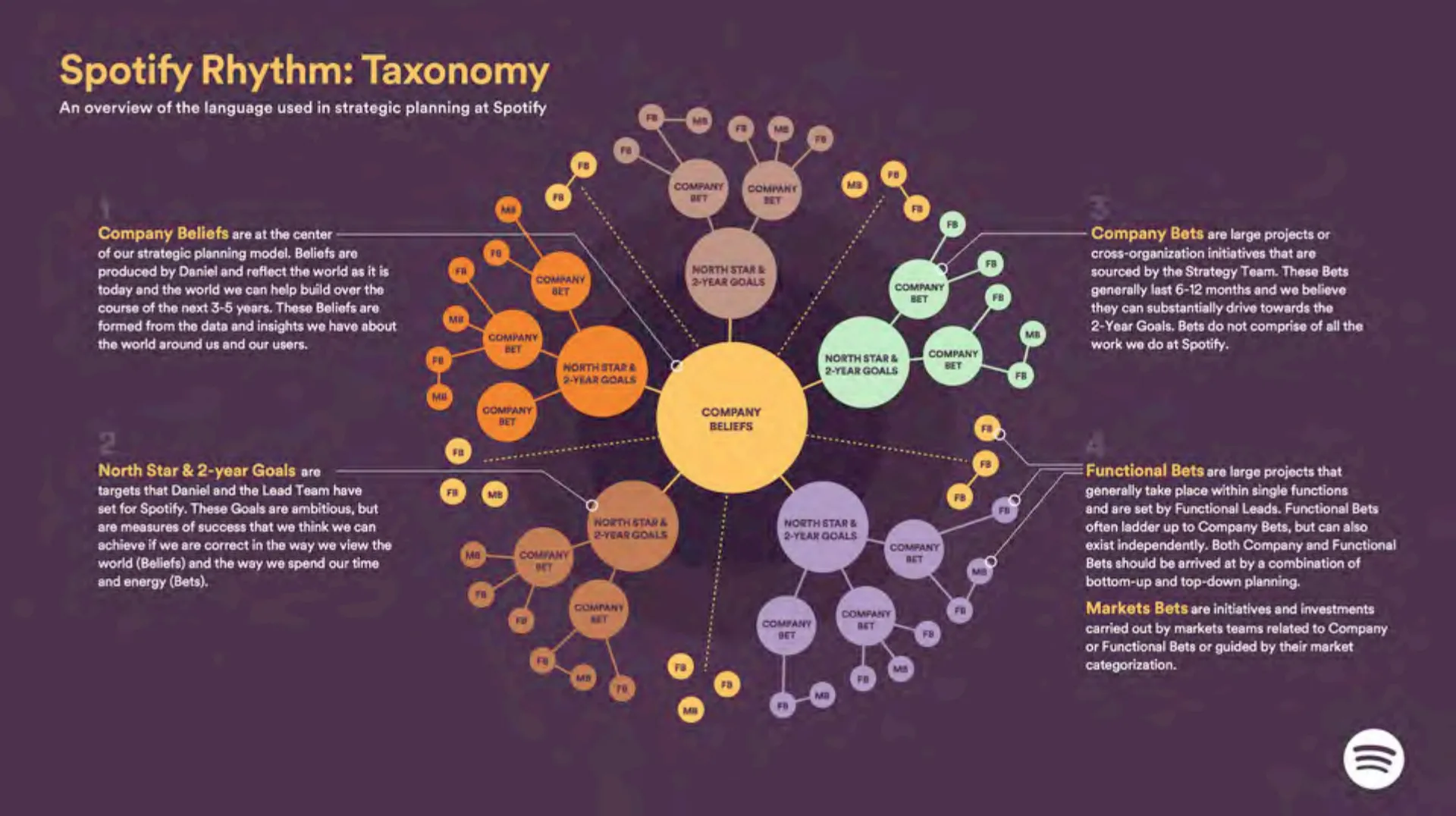

Spotify has famously been battling with this problem for a long time, and one way in which they have tackled it is something they call Spotify Rhythm. It’s a way for their small teams to stay aligned with the long-term beliefs of Spotify, while still iterating and focusing on the user.

It starts at the core with the company beliefs and spans out from there to North Star (2-year) goals, then from those to more focused Company Bets (6–12 months), and from those to functional bets. Everything in here is also data-driven: data leads to insight, insight backs up a belief, and the belief underpins a bet, which Spotify calls a DIBB. And while the language is different, it should sound familiar!

Credit: Spotify Rhythm: Taxonomy; Henrik Kniberg

But you don’t need a large-scale framework for your long-term planning. This is just one example of how a very large organisation does it.

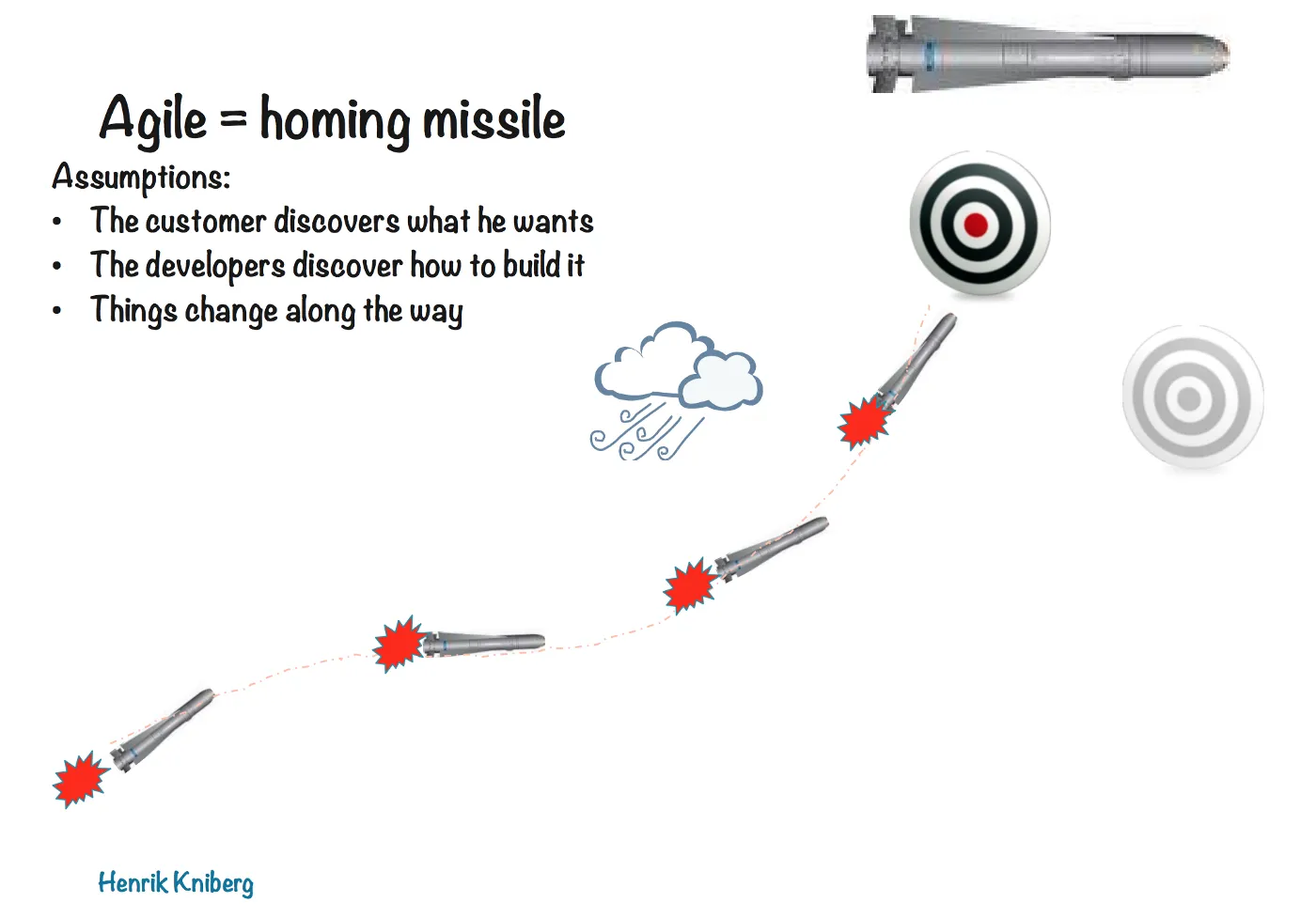

The best way to think of your product is as a homing missile. Start with a target, measure as much as you can, learn as much as you can, and accept that it changes along the way.

Credit: Henrik Kniberg, “What is Agile”

I’d like to finish with a wonderful excerpt from an interview by Reid Hoffman from the LinkedIn Speaker Series with The Lean Startup’s Eric Ries:

You think the work that you do matters, prove it. I’m sure you’re right, but let’s double-check. What’s the evidence that the work you accomplished today actually matters. […]

“I pulled a story off a story board, that was in prioritised order, and I built it according to the specifications of the product owner.” How do I know the product owner knows what the customer wants? I don’t know. Seems like he does. Seems like a smart guy. Was he right the last time? I don’t know. Did we measure even what happened the last time? Uh-oh. How many months of my life have I been pulling stories off a queue prioritised by a guy who doesn’t know what the customer wants. That doesn’t sound very good. […]

Think about all the people who are wasting their life doing this kabuki dance to pretend that we know what’s going on. How much more satisfying would it be, if every time we did an action, we knew for sure that either worked or it didn’t work; and if it didn’t work, we had the opportunity to learn immediately and influence the next action.

Nothing’s safe. Your life is precious, and you owe it to yourself to have an impact. And you can’t rely on somebody else to tell you what’s gonna have an impact or not, because nobody really knows. So given that nobody knows, why not double-check?

Watch the full interview, or skip straight to the above excerpt.

Resources and Further Reading

- http://www.syncdev.com/minimum-viable-product/

- Ries, E (2011) The Lean Startup; Crown Business

- http://www.startuplessonslearned.com/2009/03/minimum-viable-product.html

- Kniberg, H. “Agile: where are we at?” https://www.youtube.com/watch?v=gILDSmm5Pjc

- Kniberg, H. “What is Agile?” http://blog.crisp.se/wp-content/uploads/2013/08/20130820-What-is-Agile.pdf

- “Spotify Rhythm” http://blog.crisp.se/wp-content/uploads/2016/06/Spotify-Rhythm-Agila-Sverige.pdf

- Ries, E. “Vanity Metrics vs Actionable Metrics” https://tim.blog/2009/05/19/vanity-metrics-vs-actionable-metrics/

- “The Lean Startup | Methodology” http://theleanstartup.com/principles